# Workopia - Complete Documentation

This file contains all documentation concatenated into a single file for easy consumption by LLMs.

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

## Table of Contents

This document includes all content from this project.

Each section is separated by a horizontal rule (---) for easy parsing.

---

# Account-setting

URL: https://workopia.io/account-setting

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Applications

URL: https://workopia.io/applications

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Ai-resume-tailor-every-job

URL: https://workopia.io/blog/ai-resume-tailor-every-job

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Best-free-mcp-servers-job-search-2026

URL: https://workopia.io/blog/best-free-mcp-servers-job-search-2026

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# How-to-search-jobs-with-ai-using-mcp

URL: https://workopia.io/blog/how-to-search-jobs-with-ai-using-mcp

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Workopia-vs-career-ops-ai-job-search

URL: https://workopia.io/blog/workopia-vs-career-ops-ai-job-search

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Career-pivot

URL: https://workopia.io/career-pivot

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Case-strategy

URL: https://workopia.io/case-strategy

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Keys

URL: https://workopia.io/dashboard/keys

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Future-vision

URL: https://workopia.io/future-vision

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Interview-vault

URL: https://workopia.io/interview-vault

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Jobs

URL: https://workopia.io/jobs

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Library

URL: https://workopia.io/library

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Workopia

URL: https://workopia.io

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Payment-success

URL: https://workopia.io/payment-success

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Pricing

URL: https://workopia.io/pricing

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Privacy

URL: https://workopia.io/privacy

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Profile

URL: https://workopia.io/profile

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# David-jones

URL: https://workopia.io/resources/interview-tips/christmas-casuals/david-jones

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# David-jones-logistics

URL: https://workopia.io/resources/interview-tips/christmas-casuals/david-jones-logistics

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# How-to-ace-christmas-casual-job-interviews-in-australia

URL: https://workopia.io/resources/interview-tips/christmas-casuals/how-to-ace-christmas-casual-job-interviews-in-australia

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Hugo-boss

URL: https://workopia.io/resources/interview-tips/christmas-casuals/hugo-boss

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Jb-hi-fi

URL: https://workopia.io/resources/interview-tips/christmas-casuals/jb-hi-fi

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Lululemon

URL: https://workopia.io/resources/interview-tips/christmas-casuals/lululemon

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Muji

URL: https://workopia.io/resources/interview-tips/christmas-casuals/muji

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Myer

URL: https://workopia.io/resources/interview-tips/christmas-casuals/myer

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Christmas-casuals

URL: https://workopia.io/resources/interview-tips/christmas-casuals

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Ralph-lauren

URL: https://workopia.io/resources/interview-tips/christmas-casuals/ralph-lauren

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Walk-in-job-hunting-guide-australia

URL: https://workopia.io/resources/interview-tips/christmas-casuals/walk-in-job-hunting-guide-australia

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# David-jones-christmas-casual

URL: https://workopia.io/resources/interview-tips/david-jones-christmas-casual

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# David-jones-logistics-christmas-casual

URL: https://workopia.io/resources/interview-tips/david-jones-logistics-christmas-casual

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Anz-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/anz-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Bhp-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/bhp-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Commbank-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/commbank-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Deloitte-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/deloitte-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Ey-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/ey-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Kpmg-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/kpmg-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Macquarie-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/macquarie-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Nab-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/nab-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Finance-strategy

URL: https://workopia.io/resources/interview-tips/finance-strategy

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Pwc-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/pwc-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Telstra-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/telstra-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Westpac-finance

URL: https://workopia.io/resources/interview-tips/finance-strategy/westpac-finance

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Atlassian

URL: https://workopia.io/resources/interview-tips/graduate-interns/atlassian

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Canva

URL: https://workopia.io/resources/interview-tips/graduate-interns/canva

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Commonwealth-bank

URL: https://workopia.io/resources/interview-tips/graduate-interns/commonwealth-bank

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Deloitte

URL: https://workopia.io/resources/interview-tips/graduate-interns/deloitte

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Deloitte-consulting

URL: https://workopia.io/resources/interview-tips/graduate-interns/deloitte-consulting

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Deloitte-data

URL: https://workopia.io/resources/interview-tips/graduate-interns/deloitte-data

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# How-to-ace-graduate-program-interviews-australia

URL: https://workopia.io/resources/interview-tips/graduate-interns/how-to-ace-graduate-program-interviews-australia

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Macquarie

URL: https://workopia.io/resources/interview-tips/graduate-interns/macquarie

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Graduate-interns

URL: https://workopia.io/resources/interview-tips/graduate-interns

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Pwc-audit

URL: https://workopia.io/resources/interview-tips/graduate-interns/pwc-audit

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Pwc-consulting

URL: https://workopia.io/resources/interview-tips/graduate-interns/pwc-consulting

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Pwc-technology

URL: https://workopia.io/resources/interview-tips/graduate-interns/pwc-technology

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Telstra

URL: https://workopia.io/resources/interview-tips/graduate-interns/telstra

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Telstra-government

URL: https://workopia.io/resources/interview-tips/graduate-interns/telstra-government

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Ubs

URL: https://workopia.io/resources/interview-tips/graduate-interns/ubs

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Hugo-boss-christmas-casual

URL: https://workopia.io/resources/interview-tips/hugo-boss-christmas-casual

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Jb-hi-fi-christmas-casual

URL: https://workopia.io/resources/interview-tips/jb-hi-fi-christmas-casual

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Lululemon-christmas-casual

URL: https://workopia.io/resources/interview-tips/lululemon-christmas-casual

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Muji-christmas-casual

URL: https://workopia.io/resources/interview-tips/muji-christmas-casual

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Myer-christmas-casual

URL: https://workopia.io/resources/interview-tips/myer-christmas-casual

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Ralph-lauren-christmas-casual

URL: https://workopia.io/resources/interview-tips/ralph-lauren-christmas-casual

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Afterpay-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/afterpay-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Airwallex-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/airwallex-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Anz-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/anz-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Atlassian-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/atlassian-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Bhp-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/bhp-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Canva-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/canva-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Carsales-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/carsales-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Coles-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/coles-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Commbank-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/commbank-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Domain-group-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/domain-group-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Domain-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/domain-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Macquarie-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/macquarie-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Nab-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/nab-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Tech-interviews

URL: https://workopia.io/resources/interview-tips/tech-interviews

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Rio-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/rio-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Rio-tinto-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/rio-tinto-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Seek-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/seek-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Seek-tech-interview-v2

URL: https://workopia.io/resources/interview-tips/tech-interviews/seek-tech-interview-v2

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Telstra-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/telstra-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Westpac-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/westpac-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Woolworths-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/woolworths-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Xero-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/xero-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Zip-co-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/zip-co-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Zip-tech-interview

URL: https://workopia.io/resources/interview-tips/tech-interviews/zip-tech-interview

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Resources

URL: https://workopia.io/resources

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Resume-lab

URL: https://workopia.io/resume-lab

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Sign-in

URL: https://workopia.io/sign-in

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Terms

URL: https://workopia.io/terms

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Trends

URL: https://workopia.io/trends

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# Workflow

URL: https://workopia.io/workflow

> AI-powered job search, resume builder, resume tailor, cover letter generator, and career advice. Free MCP server for Claude Desktop, ChatGPT, OpenClaw, Cursor, and any MCP-compatible AI assistant.

---

# The Modern ML Engineer: 2026 Market Analysis, Skill Blueprint, and Career Pivot Guide (part 2)

Source: articles/career-pivot/da-to-ml-engineer-career-switch.mdx

Machine Learning Engineering has become the most critical — and most compensated — bridge role in enterprise technology.

As AI moves from research labs into production infrastructure, organisations no longer just need people who can train models.

They need engineers who can deploy them, monitor them, scale them, and keep them running at 24/7 reliability.

Market Snapshot 2026:

- $187,500: US median ML Engineer salary

- 78%: of postings are mid-level roles

- 43%: of US jobs in California + New York

- $350K+: senior / principal total compensation

Figure 1: Comparison of market demand and salary growth projected through 2026.

5. The Pivot Playbook: Data Analyst to ML Engineer

The path from Data Analyst to ML Engineer is one of the most structurally logical career pivots in technology.

The analytical foundations are directly transferable. The gap is specific and learnable.

And the compensation uplift — from a typical analyst range of $70K–$100K to mid-level ML Engineer rates of $150K–$220K — is among the highest available in a single career transition.

| ✓ What You Already Bring |

→ What You Need to Build |

| Data cleaning, preprocessing, and feature engineering fundamentals |

SQL fluency for pipeline construction and data querying |

| Statistical reasoning: probability, hypothesis testing |

MLOps stack: Docker, Kubernetes, AWS SageMaker |

| Exploratory data analysis (Tableau / Power BI) |

GenAI stack: LLMs, RAG architectures, Prompt Engineering |

6. A Structured 12-Month Pivot Timeline

Months 1–3 — Production Python

Move from notebook scripting to production-grade code. Learn testing (pytest) and Git.

Months 3–6 — MLOps Foundations

Docker, Kubernetes basics, and one cloud ML platform (AWS/Azure).

Months 6–12 — GenAI & Portfolio

Build a working RAG application. Prepare for system design interviews.

This article is part of the Career Pivot Navigator series from HéraAI.

---

# From Intern to Principal: The Complete Data Science Career Playbook for 2026

Source: articles/career-pivot/data-scientist-cheatsheet-2026.mdx

The data science job market in 2026 is defined by a paradox: unprecedented demand for talent, yet increasing barriers to entry for newcomers. This playbook breaks down exactly what you need at each career stage.

The 2026 Landscape

According to industry reports, the data science field continues to evolve at a rapid pace. Companies are seeking professionals who can bridge the gap between technical implementation and business impact.

34%

Projected growth through 2034

$112,590

Median annual wage

Career Progression Framework

Career Progression Framework

Junior Data Scientist (0-2 years)

- Focus on technical fundamentals

- Master Python, SQL, and core ML algorithms

- Build a strong portfolio with end-to-end projects

- Expected salary range: $75K - $95K

Senior Data Scientist (3-5 years)

- Lead cross-functional projects

- Develop business acumen

- Mentor junior team members

- Expected salary range: $130K - $180K

Principal Data Scientist (5+ years)

- Define technical strategy

- Influence company-wide data culture

- Drive organizational decision-making

- Expected salary range: $200K - $280K+

Key Skills for 2026

Technical Skills

- • LLM fine-tuning and prompt engineering

- • MLOps and deployment pipelines

- • Causal inference and experimentation

- • Cloud platforms (AWS, GCP, Azure)

Business Skills

- • Domain expertise in specific industries

- • Stakeholder communication

- • Project management

- • Strategic thinking

The path forward: The journey from intern to principal requires not just technical growth, but also the development of business intuition and leadership skills. Focus on measurable impact and continuous learning to accelerate your career progression.

```

---

# The $200K Pivot: GenAI Engineer Career Guide 2025

Source: articles/career-pivot/genai-engineer-career-pivot.mdx

General software hiring is cautious. AI engineer demand is at fever pitch. The gap between those two sentences is the largest career opportunity in the current market — and it is accessible without a PhD.

By Carrie Yu · HéraAI · March 18, 2025

The current conversation about AI is dominated by displacement anxiety. The data tells a different story. What is happening in the labour market is not job erasure — it is job evolution, and the demand curve for engineers who can implement AI in production has effectively decoupled from the rest of the software hiring market. General tech hiring remains cautious. AI engineer hiring is accelerating.

The $200,000 average salary for Generative AI Engineers in 2025 reflects a genuine scarcity of talent that can move beyond API wrappers into production-grade AI systems — engineers who understand RAG architecture, context engineering, orchestration frameworks, and AI safety. The market is pricing that combination at a premium because it is structurally rare, and because the demand for it is arriving from every industry simultaneously.

{/* Stats Cards */}

$200K

average Generative AI Engineer salary in 2025 — range $140K–$260K

+90%

projected role growth over the next decade — one of the steepest demand curves in tech

6–18mo

realistic pivot timeline from backend, QA, or BI foundations to deployable AI engineer

RAG

Retrieval-Augmented Generation — the architecture that separates wrappers from real applications

{/* Image placeholder — replace filename */}

AI Researcher vs. AI Engineer: The Distinction That Opens the Market

The most persistent barrier to entering AI engineering is a misconception: that the field requires the ability to invent new neural architectures. It does not. A fundamental distinction has emerged in the 2025 market between the AI Researcher — who trains models from scratch — and the AI Engineer, who builds production applications using pre-trained models and existing AI tooling. These are different professions with different entry requirements.

| Dimension |

AI / ML Researcher |

AI Engineer ✓ The Pivot Path |

| Primary output |

Novel model architectures, academic papers, SOTA benchmark improvements |

Production applications that deliver measurable business value using existing models |

| Relationship to models |

Designs and trains models from scratch; requires deep mathematical foundations |

Selects, integrates, and optimises pre-trained models; focuses on application architecture |

| Typical background |

PhD or research Master's in ML, mathematics, or computational statistics |

Strong engineering fundamentals; Python proficiency; API and system integration experience |

| Market demand (2025) |

Concentrated at frontier labs (OpenAI, Anthropic, Google DeepMind, Meta AI) |

Broad demand across every industry sector integrating AI into products and workflows |

| Compensation ceiling |

$350K–$500K+ at frontier labs; highly concentrated and competitive supply |

$140K–$260K average; $200K median; rapidly expanding demand across non-frontier employers |

| Accessible via pivot? |

No — requires multi-year research training from foundational principles |

Yes — engineers with strong backend, QA, or BI foundations can pivot within 6–18 months |

The reframe that changes the calculus for every mid-career engineer: You are not tasked with reinventing the wheel. You are building the vehicle that uses the wheel to deliver enterprise value. The AI Engineer's job is implementation: selecting the right model for the task, building the architecture that feeds it the right data, ensuring it operates safely at production scale, and connecting its outputs to the business workflow that creates value. This is an engineering problem, not a research problem — and it is accessible to anyone with strong engineering fundamentals.

The Trojan Horse Strategy: Your Existing Skills Are the Entry Ticket

Strategic career switchers understand that they are not starting from zero. The IT skills that define competency in traditional engineering roles — Python, API integration, SQL, automation thinking, system architecture — are the same skills that underpin AI engineering at the implementation layer. The pivot is not a restart; it is a horizontal extension into a new application domain.

Backend Developer

→ AI Implementation Engineer / ML Infrastructure Engineer

Backend architecture experience maps directly to the service layer of AI applications: API orchestration, model endpoint management, latency optimisation, and the infrastructure that serves AI outputs to end users at scale.

QA / Test Engineer

→ AI Test Engineer / LLM Evaluation Specialist

The discipline of edge case thinking that defines good QA work is precisely what AI safety and model evaluation require. Adversarial testing, prompt injection detection, output regression testing, and hallucination rate monitoring are all extensions of QA methodology into a new domain.

BI / Data Analyst

→ Data Science / AI Analytics Engineer

SQL and data modelling skills transfer directly into the feature engineering and vector database query layer that underlies modern AI applications. A BI analyst who adds Python, vector database fluency, and RAG architecture knowledge has a complete profile for AI analytics roles.

DevOps / Platform Eng.

→ MLOps Engineer / AI Platform Engineer

The operationalisation of ML models — model versioning, deployment pipelines, A/B testing infrastructure, drift monitoring, and rollback mechanisms — is an extension of DevOps principles into the ML lifecycle. MLOps is one of the fastest-growing sub-specialisations within the AI engineering market.

Product Manager

→ AI Product Manager / AI Strategy Lead

The most in-demand non-engineering AI role in the 2025 market. PMs who understand AI capabilities and limitations — and can define product requirements that are technically achievable with current AI tooling — are structurally scarce. The pivot does not require coding fluency; it requires AI literacy deep enough to separate feasible from speculative.

The guiding principle for 2025 career pivot strategy: If you can debug a script, you can debug a model. The cognitive pattern of isolating a failure, forming a hypothesis about its cause, testing against edge cases, and iterating toward a fix is identical in both contexts. The domain knowledge is different. The engineering discipline is the same. The market is currently paying a $60K–$100K premium over general software engineering salaries to engineers who have made this connection and built the AI-specific skills on top of their existing foundation.

Wrappers vs. Real Applications: The Context Engineering Stack

The compensation gap between a $100K entry-level AI role and a $200K–$260K senior AI engineering role maps almost exactly onto one technical distinction: the ability to build a context engineering stack rather than an API wrapper. The market is oversupplied with engineers who can call an LLM API. It is structurally undersupplied with engineers who can build the retrieval, orchestration, and safety layers that make an AI application enterprise-deployable.

API Wrapper (Entry Level)

- ✗ Direct OpenAI / Anthropic API calls with no intermediate layer

- ✗ Static system prompts with no dynamic context injection

- ✗ No retrieval layer — model relies on training data alone

- ✗ No memory management — context window fills and truncates

- ✗ No safety layer — raw model output sent directly to users

This is the starting point, not the destination. API wrappers are buildable by anyone with basic Python knowledge. They command entry-level salaries and are immediately replicable by the next developer.

Context Engineering Stack (Elite Tier)

- ✓ RAG architecture: vector database retrieval injects private enterprise data into model context

- ✓ Orchestration layer (LangChain / LlamaIndex) manages multi-step reasoning and tool calls

- ✓ Context compaction: summarisation strategies prevent context window overflow

- ✓ Context isolation: role-based access control determines which data each user's context receives

- ✓ Safety layer: prompt injection detection, output filtering, adversarial testing protocols

This is what enterprises pay $200K–$260K for. The engineer who can build this stack is not building a chatbot — they are building an AI system that handles private enterprise data safely, reasons across multiple information sources, and maintains accuracy at production scale.

AI Safety: The Competitive Moat That Justifies the Top-Tier Premium

As enterprises move from AI pilots to AI production systems, the risk calculus changes. A chatbot prototype that occasionally produces incorrect output is an inconvenience. An enterprise AI system that handles HR records, financial data, or customer PII and occasionally produces incorrect or leaked output is a liability event. The engineers who can build safety into the architecture — not as an afterthought but as a foundational design constraint — are the ones who unlock the enterprise contracts that pay at the top of the market range.

Prompt Injection Attacks

What it addresses: Malicious inputs embedded in user queries that attempt to override system instructions or extract private data

An enterprise AI application that handles HR data, financial records, or customer PII is a direct target for prompt injection. An engineer who cannot demonstrate injection detection and mitigation in their portfolio is not deployable in regulated enterprise environments — which represent the majority of high-value AI implementation contracts.

Bias and Fairness Auditing

What it addresses: Systematic evaluation of model outputs across demographic, geographic, and categorical subgroups to identify differential treatment

The EU AI Act and emerging US AI regulation require documented bias assessments for high-risk AI applications. Engineers who can perform and document fairness audits are a regulatory compliance asset — not just an ethical preference. This skill has direct financial value in regulated industries.

Adversarial Testing

What it addresses: Structured attempts to break the system: edge case inputs, out-of-distribution queries, jailbreak attempts, and stress testing of safety constraints

Production AI systems encounter adversarial users by default. An engineer who has only tested their application on well-formed inputs has not tested their application. Adversarial testing methodology is the QA discipline applied to AI safety — and it is the skill that converts a functional prototype into a deployable enterprise product.

Context Isolation

What it addresses: Architectural controls that ensure each user or role only receives model context appropriate to their access level

In a multi-tenant enterprise RAG application, a junior analyst must not receive context that contains executive compensation data, even if that data exists in the same vector database. Context isolation is not a security afterthought — it is the foundational architectural requirement that makes enterprise RAG legally deployable.

Output Monitoring

What it addresses: Logging, sampling, and automated evaluation of model outputs in production to detect drift, hallucination rates, and policy violations

A model that performed well at launch will degrade over time as the world changes and its training data becomes stale. Output monitoring is the mechanism that surfaces this degradation before it becomes a business incident. Engineers who build monitoring into their systems from day one are demonstrating production maturity that justifies the senior-tier compensation premium.

The principle that defines the AI engineering opportunity in 2025: The barrier to entry is lowering for engineers with strong logical foundations. The rewards are increasing for engineers who can direct the machine's intent — who understand not just how to call an API, but how to build the context, safety, and orchestration architecture that makes an AI system trustworthy at enterprise scale. In a world where AI can write the code, the engineers who decide what the code should do, what data it should see, and what it must never do are the ones the market is paying $200K for. That is the pivot.

This article is part of the Career Pivot Navigator series from HéraAI — Instant Access to 5.8M+ Active Jobs Worldwide.

---

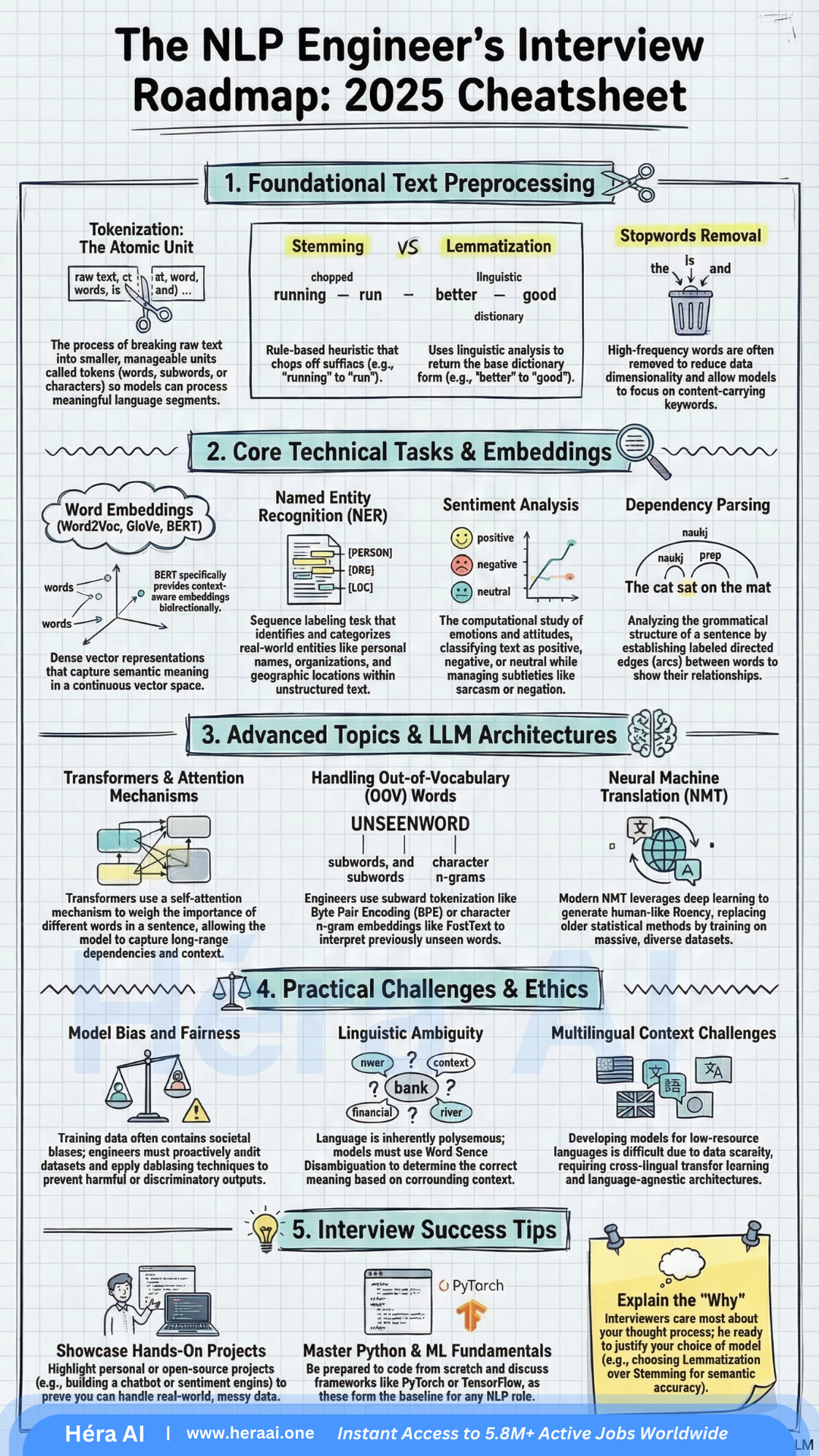

# The NLP Gold Rush: 5 Truths About the 2026 Job Market Every Candidate Needs to Know

Source: articles/career-pivot/nlp-blueprint-career-guide.mdx

The global NLP market is projected to surpass $201 billion by 2031. The opportunity isn't scarce — the talent to seize it is.

By Carrie Yu · HéraAI · March 15, 2026

The AI boom has created a landscape defined by equal parts immense opportunity and real confusion. While 'AI' has become a corporate buzzword, the most lucrative and stable career opportunities are concentrating within a specific discipline: Natural Language Processing.

NLP is the engine behind the Generative AI revolution — the technology allowing machines to grasp, interpret, and generate human language. And right now, the market is desperately short on people who can build it well. Surviving the 2026 hiring cycle requires more than a certificate. It requires a strategic understanding of how the technical and economic pieces actually fit together. Here are five truths that will change how you approach this market.

{/* Stats Cards */}

$201B

projected global NLP market by 2031

$116K

median salary for Junior NLP Engineers

$304K

ceiling for Director of Engineering roles

{/* Image — replace filename with actual image */}

1. The 'Junior' Label Is a Misnomer — And the Salary Floor Reflects That

One of the most persistent myths in tech hiring is that 'Junior' roles in AI are low-paying entry points. In the NLP world, that label describes what is, in practice, a high-impact engineering role with a salary floor most industries reserve for senior staff.

The reason the floor is this high comes down to one thing: human ambiguity. Even a baseline NLP role requires the ability to teach machines to parse the messiness of human intent — sarcasm, context shifts, cultural inference, contradictory phrasing. That's not a skill that scales easily.

Junior NLP Engineer

$116,000

median base salary

Senior NLP Researcher

$241,000

median base salary

Director of Engineering

$304,000

reported ceiling

What this means for you: These figures aren't just attractive compensation data — they reflect genuine talent scarcity. Companies are paying this much because they can't find enough people who can bridge computational linguistics and deep learning. That gap is your leverage.

2. Linguistic Intuition Is the Differentiator That Separates Good Candidates from Hired Ones

Thousands of candidates can list PyTorch and TensorFlow on their resume. Far fewer can explain why a model fails to handle sarcasm — or what it would take to fix it.

That distinction is linguistic intuition: the ability to reason about the gap between what a model processes and what a human actually means. It's not a soft skill. It's a technical design capability. The most effective NLP engineers understand the structural difference between syntax (how language is arranged) and semantics (what it actually means). That understanding directly shapes model architecture decisions — which training signals to weight, which evaluation metrics to trust, and where the model is likely to fail silently.

What Linguistic Intuition Looks Like in Practice

Failure diagnosis

Identifying why a model underperforms on negation, irony, or code-switching between languages.

Architecture decisions

Choosing the right tokenization and embedding strategy based on the linguistic properties of your data.

Evaluation design

Knowing which benchmark metrics actually reflect real-world language behavior versus those that reward overfitting.

Cross-cultural sensitivity

Understanding how idioms, humor, and register shift across demographics and languages.

Interview signal: When asked about model limitations, don't just describe the technical failure. Explain the linguistic phenomenon behind it. That's the answer that gets offers.

3. Bias Mitigation Is Now a Hard Engineering Skill — Not an Ethics Elective

The industry has moved from 'pure tech' to 'responsible tech.' In the 2026 hiring landscape, if you can't speak concretely to bias mitigation, you're a liability — particularly for roles in hiring, law enforcement, healthcare, and customer service, where biased model outputs carry legal and operational consequences.

The framing shift that matters: stop treating debiasing as an ethical consideration and start treating it as a technical requirement embedded in your engineering pipeline.

Bias Mitigation: The Technical Toolkit

Active Annotation

Auditing training data to systematically identify and flag prejudiced or unrepresentative language before model training begins.

Data Scrubbing

Removing or re-weighting data points that encode societal inequalities, with documented methodology that can be reviewed by stakeholders.

Evaluation Loops

Building detection metrics into the pre-deployment pipeline specifically designed to catch biased outputs across demographic subgroups.

Counterfactual Testing

Evaluating whether model outputs change when protected attributes (race, gender, age) are swapped in otherwise identical inputs.

The hiring reality: Senior hiring managers increasingly treat bias mitigation fluency as a minimum bar, not a bonus. Candidates who can walk through a concrete debiasing pipeline — with specific tools and metrics — stand out immediately.

4. Transformer Architecture Is the Baseline — Know It Beyond the Buzzwords

The 2026 interview room has moved past recurrent neural networks. The baseline expectation is a genuine understanding of the Transformer architecture: not just that it works, but why it outperforms earlier approaches and what its actual limitations are.

The key insight: Transformers solved the long-range dependency problem that made RNNs unreliable for complex language tasks. The self-attention mechanism allows the model to evaluate all tokens in a sequence simultaneously, rather than processing them sequentially and losing context over distance.

Core Transformer Concepts for 2026 Interviews

Self-Attention Mechanisms

How the model assigns importance weights across all tokens in a sequence simultaneously, enabling parallel processing and long-range context capture.

Bidirectional Context (BERT)

Why reading left-to-right and right-to-left simultaneously produces richer contextual representations than unidirectional models.

Cross-lingual Transfer Learning

Applying knowledge from a high-resource language to improve performance in a lower-resource one — increasingly required for global product deployments.

Fine-tuning vs. Pretraining trade-offs

When to adapt an existing model versus train from scratch, and how to justify that decision under compute and data constraints.

💡 Expert Tip — The Lemmatization vs. Stemming Question

This question appears in production-level interviews to test whether you understand linguistic accuracy versus computational efficiency trade-offs.

Stemming

Faster but rule-based — it often produces non-standard roots (e.g., 'comput' from 'computing') that create noise in downstream tasks.

Lemmatization ✓

Uses morphological analysis to return a valid dictionary form (e.g., 'run' from 'running') — the only defensible choice for high-stakes applications where output quality is non-negotiable.

The answer that wins the room: state the trade-off explicitly, then defend lemmatization with a specific production context.

5. Portfolios Are the New Resume — Build Projects That Prove Production Readiness

Theoretical knowledge is a commodity. In 2026, what separates shortlisted candidates from the rest is demonstrated ability to build systems that handle real-world messiness — not clean benchmark datasets.

The rise of Shadow AI (employees using AI tools outside official IT channels) and AI Democratization means hiring managers are increasingly evaluating whether you can build the tools others are already using. Your portfolio is where you prove that.

Project 1

Sentiment Analysis with edge case handling

Specifically addressing negation ('not bad'), sarcasm, and mixed-sentiment documents. Shows you've thought beyond the tutorial.

Project 2

Conversational Agent using LangChain

Demonstrates you can build the exact infrastructure driving enterprise AI adoption — and that you understand multi-turn context management.

Project 3

Named Entity Recognition (NER)

Structured data extraction from unstructured text. Highly valued in legal, healthcare, and finance applications.

Project 4

Cross-lingual model evaluation

Testing a multilingual model on a low-resource language shows awareness of the next frontier in NLP deployment.

🎯 Interview Tactic — The Out-of-Vocabulary (OOV) Problem

A major red flag for hiring managers is a candidate who can't articulate a strategy for handling words the model has never seen. The answer interviewers are looking for:

Byte Pair Encoding (BPE)

Breaks unknown words into subword units, enabling the model to construct a reasonable representation from familiar components.

FastText

Uses character n-grams to build word representations, making it robust to misspellings and morphological variations.

Mentioning either approach, with a clear explanation of when you'd choose one over the other, signals genuine production experience.

The portfolio principle: Every project you include should answer one question for the hiring manager: 'Can this person handle the failures that don't appear in documentation?' Show the edge cases you solved, not just the pipelines you built.

The Future Belongs to Engineers Who Ensure AI Truly Understands Language

As AI Democratization accelerates, the number of people who can use the tools will grow rapidly. The number who can ensure those tools are accurate, ethical, and genuinely language-aware will remain scarce.

The engineers who define this next phase won't just generate text. They'll be part linguist, part engineer, part strategist — capable of navigating the boundary between what a model outputs and what a user actually needs. The field is moving toward multilingual embeddings, complex discourse analysis, and real-time adaptive systems. The candidates who thrive will be the ones who understand not just how these systems work, but why they sometimes don't — and what it takes to fix them.

At HéraAI, that's the level of strategic clarity we help engineers develop. The NLP market in 2026 rewards engineers who combine technical depth with linguistic intuition — and who can prove it through shipped projects, not just credentials.

This article is part of the Career Pivot Navigator series from HéraAI — Instant Access to 5.8M+ Active Jobs Worldwide.

---

# The 5 Python Skills That Actually Get You Hired in 2026

Source: articles/career-pivot/python-core-skills-toolkit-2026.mdx

Most candidates know Python. Fewer can explain their code under pressure. Here's the difference — and how to close it before your next technical screen.

By Carrie Yu · HéraAI · March 14, 2026

Recruiters are not impressed by GitHub repos, bootcamp certificates, or the phrase 'strong Python skills.' What passes a technical screen in 2026 is something more specific: the ability to explain your reasoning under pressure. Why did you choose that data structure? What happens if this function receives an unexpected type? What does Python actually do with that try-except block?

The five skill areas below are not exhaustive Python knowledge — they are the specific domains that separate candidates who fumble under questioning from those who don't. Each one has a surface-level answer that gets you partway through a screen, and a deeper answer that actually gets you hired.

{/* Stats Cards */}

5

core Python skill areas that determine interview outcomes

else

the try-except block most candidates don't know exists

LEGB

the scope resolution rule that decides every closure question

__

double underscore — the OOP concept that filters senior candidates

{/* Image — replace filename with actual image */}

{/* Overview Table */}

The Five Skills at a Glance — What's Really Being Tested

Before going deep on each skill, it's worth mapping what interviewers are actually evaluating. The questions aren't about definitions. They're designed to expose whether you understand trade-offs, can predict behaviour, and have built things that work in the real world.

| # |

Skill Area |

The Real Interview Trap |

What's Actually Being Tested |

| 01 |

Built-in Data Types |

Why can't a list be a dictionary key? |

Predicting behaviour from mutability rules — not reciting type lists |

| 02 |

Control Flow & Exceptions |

Do you know the else block in try-except? |

Writing intentional, production-aware error handling — not reactive patching |

| 03 |

Functions & Scope |

Trace this closure: what value does the nested function return? |

LEGB mastery + first-class functions + API design instincts |

| 04 |

Object-Oriented Design |

Why would you use inheritance here, and what are the risks? |

Systems thinking — encapsulation, extension, and trade-off reasoning |

| 05 |

Standard Library Fluency |

Which stdlib modules did you use in your last project, and why? |

Engineering maturity — using what exists before building from scratch |

The meta-skill beneath all five: Technical interviews in 2026 are judgment tests, not memory tests. The strongest candidates don't just answer the question — they volunteer the trade-off. 'I used a tuple here because it's immutable and hashable, which matters because...' That instinct to lead with why signals the engineering maturity that senior tracks are looking for.

01 — Built-in Data Types: It's About Mutability, Not Memorisation

Most candidates can list Python's built-in types. Fewer can predict what happens when those types interact with the language's fundamental mechanisms — and that distinction is exactly what interviewers are probing when they ask about data types.

The core question is always some version of: 'Can you reason about behaviour from first principles?' The most commonly used probe is the dictionary key question, because it requires understanding the relationship between mutability, hashability, and Python's internal dictionary implementation.

✓ Immutable (hashable → valid dict key)

- • int, float, complex

- • str

- • tuple

- • frozenset

- • bytes

- • bool

✗ Mutable (not hashable → invalid dict key)

- • list

- • dict

- • set

- • bytearray

- • Custom objects (default)

- • array.array

Interview principle: Immutable types are hashable because their value cannot change after creation — so a consistent hash is always producible. Mutable types cannot guarantee a stable hash, which would break dictionary lookup integrity. The reasoning chain interviewers want to hear: mutable types can change their content after creation → a consistent hash cannot be guaranteed → Python's dict requires stable hashes for key lookup → mutable types cannot be dictionary keys.

Career hack from HéraAI analysis: In interviews, always lead with why you chose a data structure — not just what it does. 'I used a frozenset here because the collection won't change and I need to use it as a dictionary key later' signals the kind of forward-thinking that distinguishes a mid-level candidate from a senior one.

02 — Control Flow & Exception Handling: Stop Writing Fragile Code

The try-except block is one of the most underused tools in a junior developer's arsenal, and one of the most incorrectly used in a mid-level one. The pattern most candidates know — try and except — is the minimum. The full four-block structure is what interviewers use to filter candidates who write intentional code from those who write reactive code.

try

Code that might raise an exception

Keep this block narrow — wrap only the specific operation that can fail, not the entire function body.

except

Handles the error if one is raised

Always catch specific exception types (except ValueError:), never bare except:. Catching everything hides bugs and makes debugging production incidents significantly harder.

else

Runs only if no exception was raised ⭐

The filter block most candidates don't know exists. Use it for logic that should only run on success — separating the 'happy path' from error handling makes intent explicit.

finally

Always runs — exception or not

Resource cleanup: closing files, releasing locks, terminating connections. Equivalent to using a context manager (with statement), which is the preferred pattern for resource management.

The context manager signal: In behavioral interviews, describing a time you used a with statement for resource cleanup — file handles, database connections, network sockets — signals production awareness. The with statement implements the context manager protocol (__enter__ and __exit__), which guarantees cleanup even if an exception is raised. This is the preferred pattern over try/finally for resource management.

03 — Functions & Scope: The One Most Candidates Skip

LEGB is four letters that appear in more Python interview questions than any other single concept. Python resolves variable names in exactly one order: Local → Enclosing → Global → Built-in. If you can't trace through a closure and explain precisely which value a nested function will use — without running the code — you will fail live coding screens at the mid-level and above.

Functions & Scope — What Strong Candidates Know

LEGB in practice

Python looks for a variable name in the local scope first, then in any enclosing function scopes (for closures), then at the module level, then in Python's built-in namespace. The first match wins. If no match is found, a NameError is raised.

First-class functions

In Python, functions are objects. They can be passed as arguments, assigned to variables, stored in data structures, and returned from other functions. This is the foundation of decorators, callbacks, and every functional programming pattern in Python.

Lambda functions

Single-expression anonymous functions (lambda x: x * 2). Know when to use them — sort keys, map/filter, short callbacks — and when not to. Anything requiring more than one expression, a docstring, or meaningful readability should be a named function.

*args and **kwargs

Not just syntax — a signal that you understand flexible API design. *args collects positional arguments into a tuple; **kwargs collects keyword arguments into a dict. Demonstrating them in an interview shows you can build APIs that don't break when requirements change.

Closures and the nonlocal keyword

A nested function that references a variable from its enclosing scope creates a closure. The nonlocal keyword allows the nested function to rebind that variable. This is the mechanism behind many factory functions and stateful decorators.

Interview technique: When asked to write a function, proactively mention scope and argument design. 'I'm using **kwargs here to keep the API flexible — callers can pass any additional parameters without breaking the function signature' signals the kind of API-level thinking that senior interviewers reward.

04 — Object-Oriented Programming: Think in Systems, Not Scripts

OOP questions in Python interviews are not about knowing what a class is. Every candidate knows what a class is. The interview is probing whether you understand why OOP exists — what problems it solves, when it creates more complexity than it resolves, and what the specific Python implementation details mean for how you design systems.

| Concept |

What It Is |

What the Interview Is Actually Probing |

| self |

The instance reference |

self is the specific object being operated on — not the class. Every instance method receives it as the first argument automatically. Candidates who can't articulate this distinction haven't built enough with classes under pressure. |

| __init__ |

The constructor |

Called automatically when a class is instantiated. Sets up the object's initial state. Confusing __init__ with __new__ (which creates the object before __init__ runs) is a common senior-level probe. |

| super() |

Parent class accessor |

Calls the parent class method without hardcoding the parent's name. Critical for clean inheritance chains — especially in multiple inheritance where Method Resolution Order (MRO) determines which parent is called. |

| _name |

Protected convention |

Signals 'internal use' to other developers. Not enforced by Python — it's a documentation convention. The underscore says 'you can access this, but you shouldn't unless you know what you're doing.' |

| __name |

Name mangling |

Becomes _ClassName__name internally. Prevents accidental override in subclasses. This is the one interviewers use to separate candidates who've read about access control from those who've debugged it in production. |

Design instinct that interviewers notice: When asked to design a system in an interview, default to OOP and explain your encapsulation decisions explicitly. 'I'm keeping this attribute private with a double underscore because it should only be modified through the update() method — direct access would bypass the validation logic' demonstrates exactly the architectural awareness that distinguishes a systems designer from a script writer.

05 — Standard Library Fluency: The Mark of a Self-Sufficient Developer

Knowledge of Python's standard library is a proxy for production experience. Candidates who reach for the right built-in module before writing a custom implementation signal two things: they've built enough real systems to know what already exists, and they understand that shipping fast and reliably matters more than writing everything from scratch.

os / sys

System + file operations

File path construction, environment variable access, command-line argument parsing. Essential for scripting, DevOps tooling, and any automation role. Know os.path.join() vs. pathlib.Path — the latter is now the preferred modern pattern.

json / csv

Data serialization

Parse and emit structured data. Every API integration and data pipeline uses these. Know the difference between json.loads() (string → object) and json.load() (file → object). Confusing them in a live screen is a red flag.

datetime

Time and date handling

Date arithmetic, timezone conversion, formatting with strftime() and strptime(). Underestimated until you're debugging a production incident at 2am caused by timezone-naive datetime objects in a UTC system.

math / random

Numerical and probabilistic

Mathematical constants (pi, e), power and log functions, random sampling and shuffling. Appears in ML-adjacent roles, simulation tasks, and anywhere approximation or stochastic behaviour is required.

collections

Specialised data structures

Counter, defaultdict, deque, OrderedDict, namedtuple. Candidates who reach for these instead of reimplementing them from scratch signal strong library awareness and production instincts.

Portfolio documentation signal: In your portfolio projects, document which standard library modules you used and why you chose them over third-party alternatives. 'I used pathlib.Path instead of os.path because the object-oriented interface is cleaner for complex path operations and it's the recommended modern pattern' demonstrates engineering maturity in a way that raw code does not.

Judgment, Not Memory

The five skill areas in this article share a common thread: they are all domains where the surface-level answer is easy to produce and insufficient to pass. Knowing that a tuple is immutable is the entry point. Understanding why that immutability matters for hashability, dictionary keys, and memory behaviour — and being able to articulate that reasoning without prompting — is what gets you hired.

Mastering these five areas doesn't just prepare you for interviews. It changes how you write code in practice: with more deliberate data structure selection, more intentional error handling, more thoughtful API design, and a clearer instinct for when to use what the language already provides rather than building it again. At HéraAI, the Interview Cheatsheet Vault is built to develop exactly this kind of depth — not just answers to specific questions, but the reasoning fluency that holds up when the interviewer pushes back.

This article is part of the Interview Cheatsheet Vault series from HéraAI — Instant Access to 5.8M+ Active Jobs Worldwide.

---

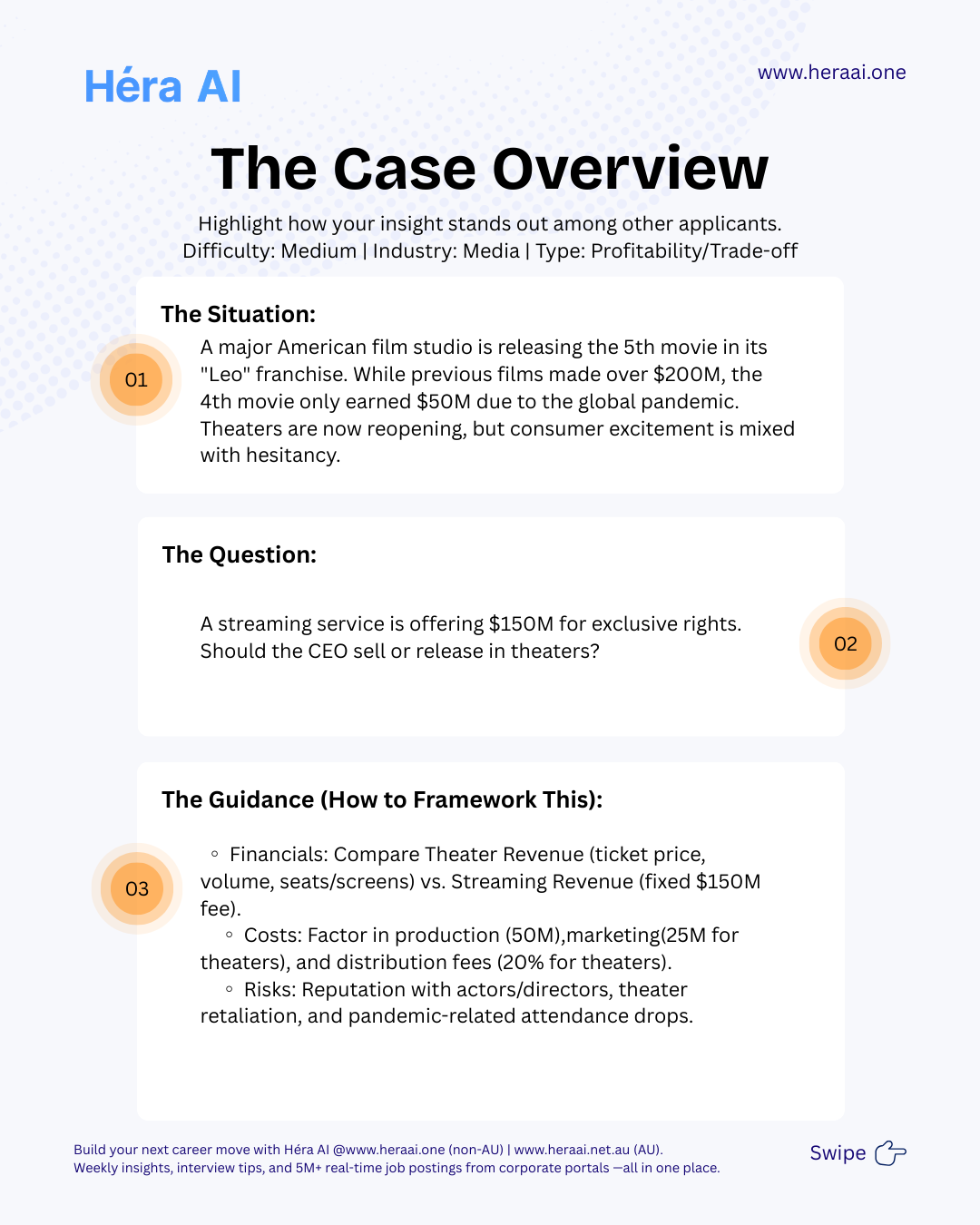

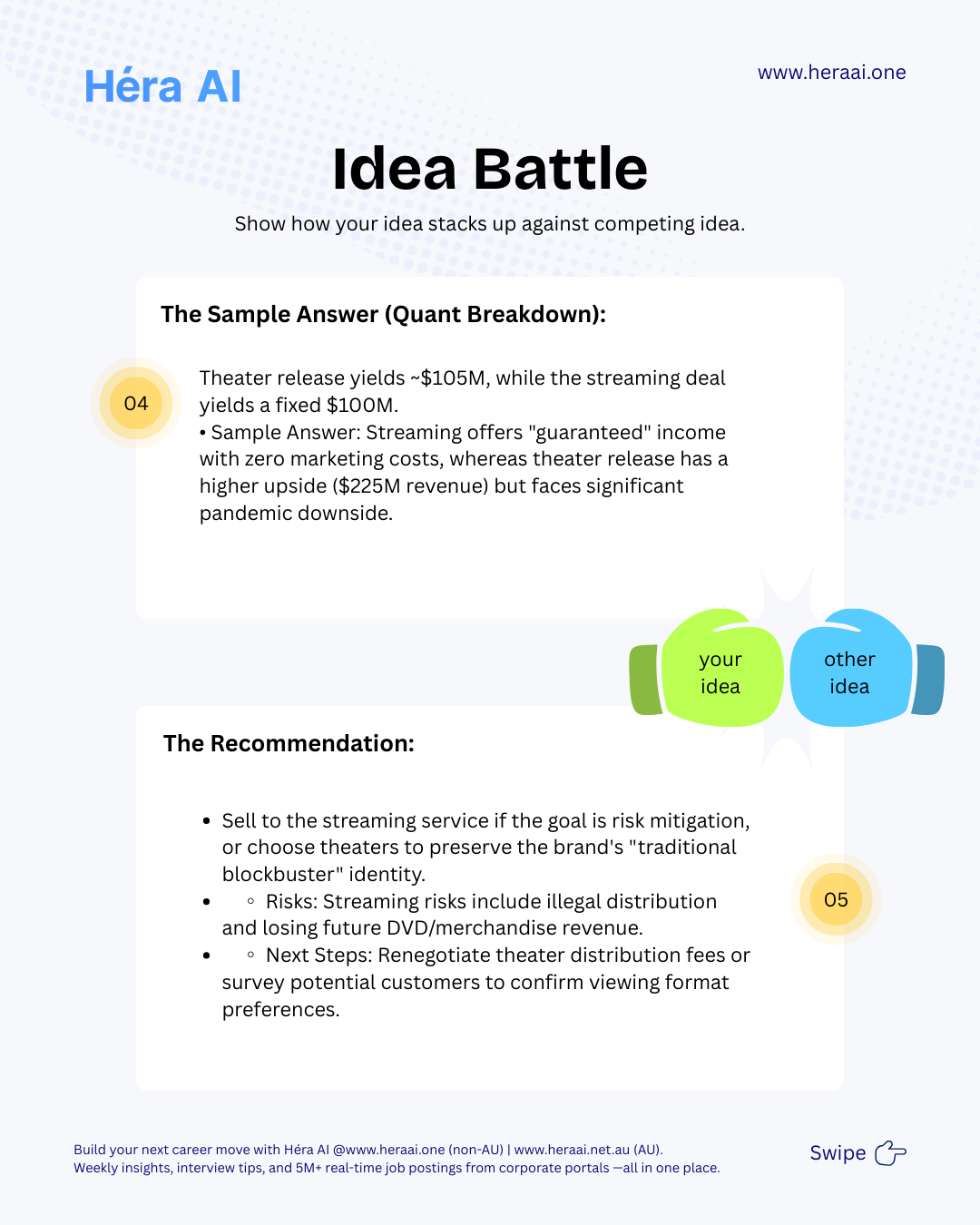

# Case 1 - Strategy Consulting Practice

Source: articles/case-strategy/case-1.mdx

#### Case 1: PE Portfolio Strategy — A Positive NPV Does Not Automatically Mean Go

Day 1 of 30. An AT Kearney-style private equity case in the automotive sensor market. The math is the starting point. The investment recommendation requires three layers of thinking that the NPV model cannot provide.

Case 1 establishes the foundational principle that runs through every case in this 30-day series: data supports the recommendation; it does not make it. The case is a PE portfolio strategy decision — whether to invest in a business operating in the automotive sensor market. The surface question is whether the NPV is positive at a 10% cost of capital. The real question is what a positive NPV means for an actual investment decision.

The automotive sensor market is growing at approximately 5% annually, driven by structural factors — electrification, autonomous driving requirements, and OEM safety mandates. The market is fragmented, meaning no single player has locked up a dominant position. These two facts — structural growth and fragmentation — make the market attractive on a surface read. The deeper analysis requires three layers of thinking that sit above the financial model.

PE strategy cases test a specific analytical maturity: the ability to move from 'the NPV is positive' to 'here is what the NPV means, here are the assumptions that drive it, here are the conditions under which the investment thesis holds, and here is what would have to be true for the investment to succeed.' That sequence — calculation to interpretation to recommendation — is the consulting skill that Day 1 is designed to introduce.

### Three Layers of Thinking Above the NPV Model

The NPV calculation is the starting point of the analysis, not the conclusion. Every PE strategy case requires three distinct analytical layers, each answering a different question about the investment decision.

The principle that Day 1 establishes for the entire 30-case series: 'Running an NPV analysis is relatively straightforward. Explaining what the result means for a real investment decision is not. Consulting is not about perfect calculations — it is about using data to support a clear, defensible recommendation. The NPV tells you whether the investment creates value under your assumptions. Strategy tells you whether the assumptions are right and whether the investment fits the broader portfolio. Both are required.'

### What PE Strategy Cases Are Really Testing

### The 5-Step Framework

The meta-lesson that Case 1 is designed to establish — foundational for every case in this series: A positive NPV does not automatically mean go. Especially in PE-style investment cases, the real value comes from asking: Does this opportunity fit the firm's broader strategy? What risks matter most in a fragmented competitive landscape? What would have to be true for this investment to succeed? Those questions do not live in a financial model — they come from structured thinking. This is the principle that governs every case that follows in this 30-day series.

---

# Case 10 - M&A Due Diligence

Source: articles/case-strategy/case-10.mdx

#### Case 10: Airlines and the Channel Tunnel — When a Superior Substitute Changes the Rules

The Channel Tunnel did not enter the London–Paris market on price. It entered on convenience, productivity, and total journey time — dimensions where airlines are structurally disadvantaged. The strategic response is not a fare war. It is a segmentation.

Case 10 is a competitive strategy case with a framing trap. The obvious response to a new competitor is defensive pricing — cut fares, increase frequency, match the threat. In this case, that response is wrong. The Channel Tunnel entered the London–Paris market not as a cheaper alternative but as a superior alternative on the dimensions that business travellers value most: door-to-door convenience, in-transit productivity, and schedule reliability. Competing on price against a structurally superior product on those dimensions destroys value without restoring share.

The more important analytical move is recognising that this is not a winner-takes-all market. Airlines and rail serve meaningfully different customer segments with meaningfully different deciding factors. City-centre point-to-point business travellers prefer rail. Passengers connecting to onward long-haul flights need airlines. Loyal corporate frequent flyers can be retained through programme investment. Leisure travellers split by price and preference. A single strategic response applied to all of these segments simultaneously is a strategy for none of them.

The case tests whether candidates can identify the correct competitive metric (door-to-door time, not in-air speed), segment the market before recommending, name the price war trap, and identify the durable advantages that rail cannot replicate. These four analytical moves — in sequence — produce a recommendation that is both credible and actionable.

### Competitive Dimension Analysis: Who Wins — and Why

The first analytical step is to map every relevant competitive dimension and determine where each mode holds a genuine advantage. The instinctive framing — airlines are faster — holds for in-air time only. On every other dimension that business travellers value, the picture is more complex.

The metric reframe that defines the entire case: 'Airlines are faster in the air. But the customer does not experience the journey as time in the air. They experience it as time from leaving their office to sitting in their meeting. On that metric — door-to-door — rail is competitive or superior on the London–Paris route. The strategic implication follows directly: airlines cannot defend their position by emphasising flight duration. They must defend it by emphasising what happens at the edges of the journey that rail cannot replicate — specifically, the connection to an onward global network.'

### Customer Segmentation: Five Segments, Five Different Outcomes

The London–Paris route is not a single market. It is five overlapping markets with different deciding factors, different competitive outcomes, and different implications for airline strategy. A recommendation that treats all passengers identically will be wrong for all of them.

### Strategic Response Options: What Works and What Destroys Value