
The AI Engineer's Survival Guide: 4 RAG Truths That Separate Hired Candidates from the Rest

In 2026, companies aren't just hiring engineers who can prompt an LLM. They're hiring engineers who can build reliable, grounded, and production-ready systems. The architecture behind those systems is RAG, and most candidates can't explain it past the acronym.

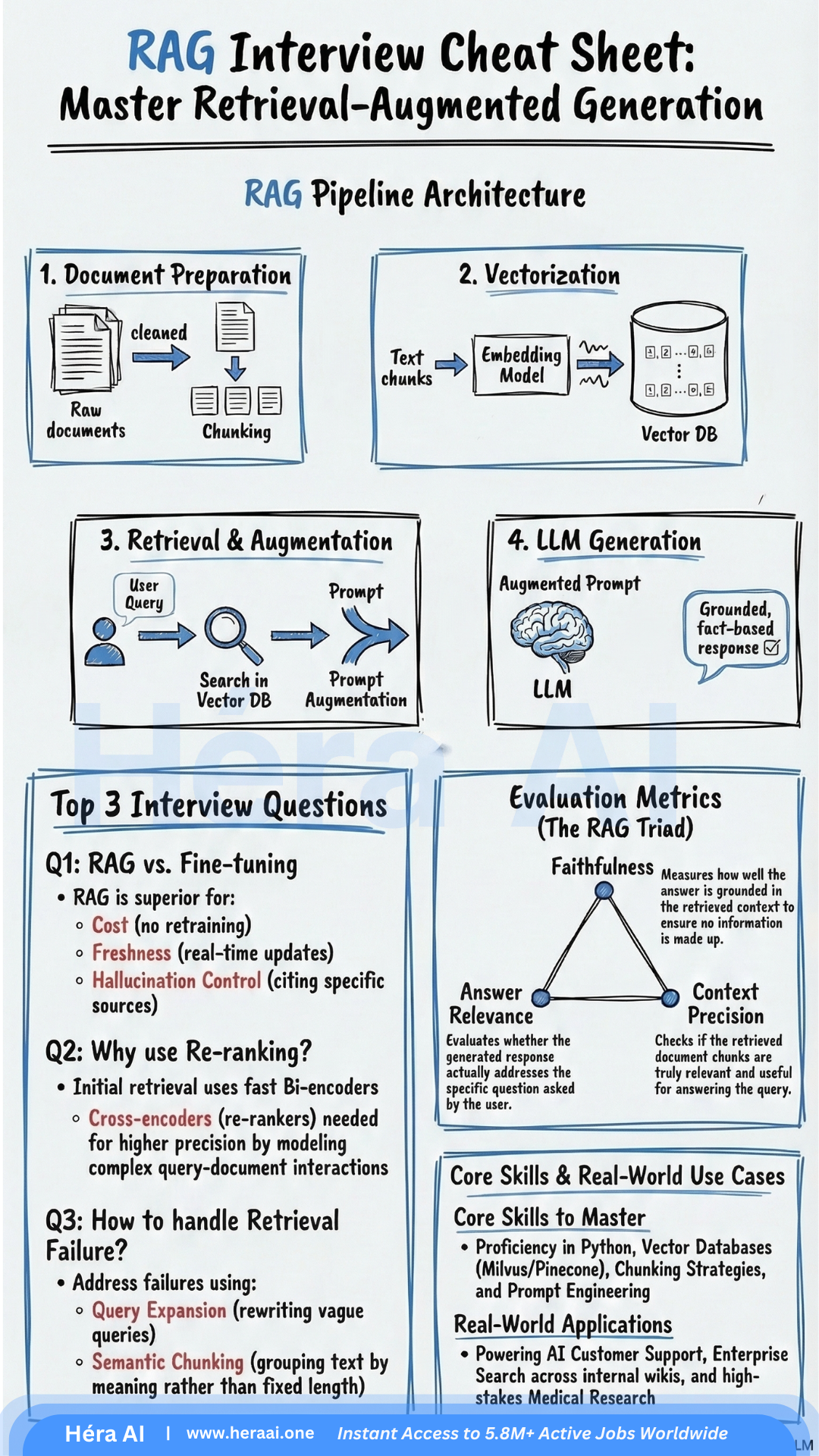

The AI job market has shifted faster than most candidates' preparation has. Retrieval-Augmented Generation is no longer a niche architecture topic — it's a baseline expectation for any engineer building AI-powered products. At HéraAI, we've analyzed over 100 technical interview patterns to identify exactly what separates candidates who understand RAG conceptually from those who've built it in production. Here are four truths you need before your next interview.

1. RAG Exists Because LLMs Alone Aren't Production-Ready — Know Why

Most candidates describe RAG as 'connecting an LLM to a database.' That's not wrong, but it's the kind of answer that gets you to the next question, not the offer. The answer that signals production experience explains the problems RAG solves: knowledge cutoffs and hallucination.

LLMs are powerful but frozen in time: they know nothing past their training cutoff. They also generate text that sounds authoritative even when it's wrong. RAG addresses both problems by supplying external, authoritative data at inference time, without the cost and turnaround time of retraining.
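To make "external data at inference time" concrete, here is a minimal sketch of the retrieve-then-augment step. Everything in it is an assumption for illustration: the `DOCUMENTS` list stands in for a real index, the keyword-overlap scoring stands in for a real retriever, and the prompt wording is just one plausible template. The resulting prompt would be passed to whichever LLM you use; no model call is shown.

```python
import re

# Hypothetical in-memory knowledge base; a real system would query an index.
DOCUMENTS = [
    "The refund window is 30 days from the delivery date.",
    "Support is available on weekdays from 9am to 5pm.",
    "Premium plans include priority support and audit logs.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most tokens with the query (toy scoring)."""
    query_tokens = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(query_tokens & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: list[str], k: int = 2) -> str:
    """Ground the question in retrieved context before it reaches the model."""
    context = "\n".join(f"- {chunk}" for chunk in retrieve(query, docs, k))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("What is the refund window?", DOCUMENTS, k=1))
```

The point of the sketch is that the knowledge lives in `DOCUMENTS`, not in the model's weights: updating a fact means editing the data, not retraining.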

2. The RAG Workflow Has Five Stages — Weak Candidates Collapse Them Into Two

Ask most candidates to walk through RAG and they'll say: 'You retrieve relevant chunks and feed them to the LLM.' That's stages 2 and 3 of 5. The stages they skip are exactly the ones that fail in production.

3. Search Is Not One Thing — and Hybrid Is the Production Standard

A question that consistently trips up mid-level candidates: 'What's the difference between sparse and dense retrieval?' The answer matters because the retrieval layer is where most RAG systems quietly fail.
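In short, sparse retrieval ranks documents by exact term matches (BM25 is the usual example), while dense retrieval ranks them by embedding similarity. Hybrid search runs both and merges the results. The sketch below shows one common way to merge them, reciprocal rank fusion (RRF). The two input rankings and the document IDs are hypothetical placeholders, and `k=60` is a conventional default rather than a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists: each document scores sum(1 / (k + rank))."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical output of a keyword (sparse) retriever.
sparse_ranking = ["doc_a", "doc_b", "doc_c"]
# Hypothetical output of an embedding (dense) retriever.
dense_ranking = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([sparse_ranking, dense_ranking])

if __name__ == "__main__":
    print(fused)
```

RRF uses only rank positions, so it sidesteps the problem that sparse and dense scores live on different scales. Documents that both retrievers rank highly rise to the top.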

4. Evaluation Is the Skill That Separates Engineers from Prototypers

You can build a RAG demo in an afternoon. Building one that you can prove is working, and diagnose when it isn't, is what companies are actually hiring for. That requires fluency in the RAG Triad: context relevance, groundedness, and answer relevance.

Most candidates have heard of these metrics. Few can explain what a low score on each one means diagnostically — and that's the follow-up question interviewers always ask.
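Here is a rough sketch of that diagnostic reasoning, assuming the commonly cited definition of the RAG Triad (context relevance, groundedness, answer relevance). The scores are assumed to come from whatever evaluator you already run, on a 0 to 1 scale. The `0.7` threshold and the wording of each diagnosis are illustrative assumptions, not standard values.

```python
# Likely failure point when a given leg of the triad scores low.
# The mapping is a simplification for illustration.
DIAGNOSES = {
    "context_relevance": (
        "retrieval: the retrieved chunks do not match the question; "
        "check chunking, embeddings, and query handling"
    ),
    "groundedness": (
        "generation: the answer makes claims the context does not support; "
        "check prompt constraints and context length"
    ),
    "answer_relevance": (
        "response: the answer does not address the question asked; "
        "check the prompt template and query rewriting"
    ),
}


def diagnose(scores: dict[str, float], threshold: float = 0.7) -> list[str]:
    """Return a diagnosis for every triad metric scoring below the threshold."""
    return [DIAGNOSES[metric] for metric in DIAGNOSES if scores[metric] < threshold]


if __name__ == "__main__":
    # Hypothetical evaluation result: retrieval is the weak leg.
    example = {"context_relevance": 0.4, "groundedness": 0.9, "answer_relevance": 0.8}
    for finding in diagnose(example):
        print(finding)
```

Reading the metrics together matters: high groundedness with low context relevance, for example, means the model is faithfully summarizing the wrong documents, so the fix belongs in retrieval rather than in the prompt.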

The Engineers Who Get Hired Can Explain What Breaks — Not Just What Works

RAG is no longer a differentiator on its own. In 2026, virtually every AI product team has a RAG system. What differentiates candidates is the ability to reason about failure: why retrieval returns noise, why generation drifts from context, and how to measure it systematically.

At HéraAI, that's the level of production-grade thinking we help engineers develop — not just the architecture, but the diagnostic mindset behind it.

Next issue: advanced RAG architectures (agentic RAG, self-querying retrievers, and graph-augmented retrieval) and what the next interview frontier looks like.

Subscribe. We cut through the noise so you don't have to.

— HéraAI Team