Every year, thousands of data science graduates flood the job market armed with impressive degrees — and get eliminated in the first phone screen. The problem isn't ability. It's the absence of a mental framework.

We took a close look at a 2026 Data Science Interview Roadmap designed specifically for advanced graduates and found four insights that most candidates don't grasp until it's too late. The gap between candidates who get offers and those who don't isn't usually technical depth. It's the presence or absence of a structured way to think about problems under pressure.

1. Technical Learning Has a Phase Order — Skipping Ahead Will Cost You

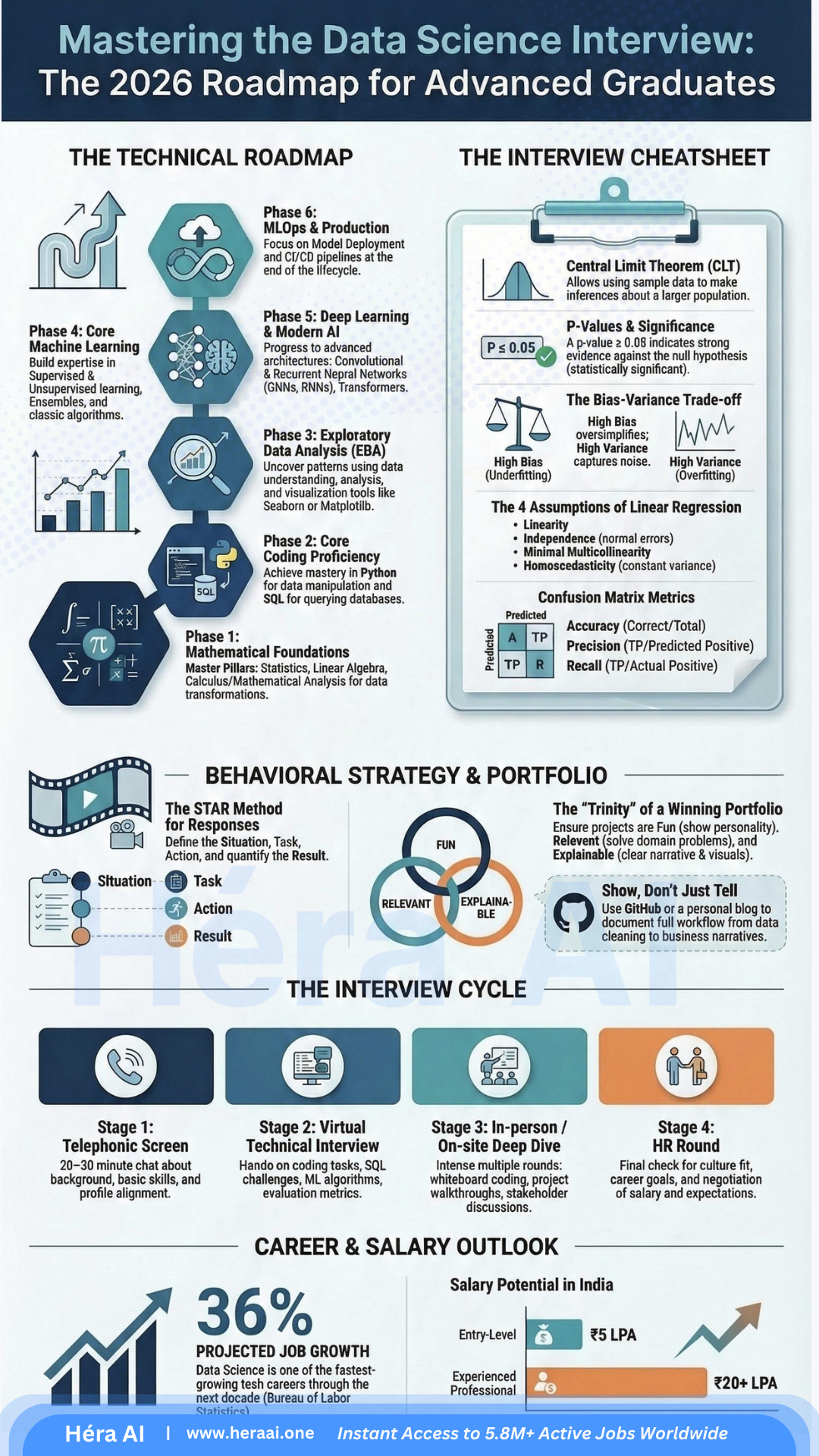

The roadmap breaks technical growth into six phases: Mathematical Foundations → Coding Proficiency → Exploratory Data Analysis → Core Machine Learning → Deep Learning → MLOps and Production. Most candidates assume Phase 4 is where interviews happen. It isn't. Interviewers at top-tier companies frequently probe Phase 1 — and they do it deliberately, because it's where most candidates have the weakest foundations.

| The Six Phases | Where Candidates Underinvest |
|---|---|
| Phase 1 · Math | Linear algebra, statistics, calculus. The layer most candidates deprioritize. The layer most senior interviewers probe first. |
| Phase 2 · Coding | Python, SQL, version control. Table stakes, but the quality of your code under pressure reveals more than your portfolio does. |
| Phase 3 · EDA | Exploratory data analysis before modeling. Candidates who skip this step in interviews reveal they've only worked with clean, pre-processed datasets. |
| Phase 4 · Core ML | Regression, classification, ensemble methods. What most candidates over-prepare for. |
| Phase 5 · Deep Learning | Neural networks, transformers, LLMs. Increasingly tested, but rarely the deciding factor in interviews. |
| Phase 6 · MLOps | Production deployment, monitoring, CI/CD for ML. The phase that separates researchers from engineers and, increasingly, what late-stage interviews test. |
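
To make the Phase 3 row concrete, here is a minimal sketch of the kind of pre-modeling check it has in mind, written in plain Python. The `rows` data, the column names, the `profile_rows` helper, and the 0 to 120 age range are all hypothetical choices made for illustration; they do not come from the roadmap.

```python
from collections import Counter

def profile_rows(rows):
    """Count missing values per column and exact duplicate rows."""
    missing = Counter()
    seen = Counter()
    for row in rows:
        for column, value in row.items():
            # Treat None and empty strings as missing (an assumption for this sketch).
            if value is None or value == "":
                missing[column] += 1
        # Sort by column name only, so rows with None values stay comparable.
        seen[tuple(sorted(row.items(), key=lambda kv: kv[0]))] += 1
    duplicates = sum(count - 1 for count in seen.values() if count > 1)
    return dict(missing), duplicates

# Hypothetical raw records with typical problems: gaps, a repeat, an impossible age.
rows = [
    {"user_id": 1, "age": 34, "plan": "pro"},
    {"user_id": 2, "age": None, "plan": "free"},
    {"user_id": 2, "age": None, "plan": "free"},
    {"user_id": 3, "age": 210, "plan": ""},
]

missing, duplicates = profile_rows(rows)
implausible_ages = [
    r["age"] for r in rows
    if r["age"] is not None and not 0 <= r["age"] <= 120
]

print("missing per column:", missing)
print("duplicate rows:", duplicates)
print("implausible ages:", implausible_ages)
```

Narrating checks like these before reaching for a model is one way to show an interviewer that you have handled data that did not arrive clean.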

Interview Signal

Devote at least 20% of your prep time to revisiting Phase 1. This is exactly where most competitors cut corners, and where you can pull ahead fastest. When an interviewer asks you to explain the bias-variance tradeoff, they want mathematical reasoning, not a rehearsed buzzword definition.
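
As one way to practice that mathematical reasoning, the sketch below simulates the decomposition MSE = bias² + variance for a deliberately simple estimator: a sample mean shrunk by a factor `c`. The estimator, the function name `simulate_shrinkage`, and every parameter value are assumptions chosen for illustration, not material from the roadmap.

```python
import random
import statistics

def simulate_shrinkage(c, mu=2.0, sigma=3.0, n=10, trials=5000, seed=0):
    """Estimate bias, variance, and MSE of the estimator c * sample_mean."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(trials):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        estimates.append(c * statistics.fmean(sample))
    bias = statistics.fmean(estimates) - mu
    # Population variance, so that mse == bias**2 + variance holds exactly.
    variance = statistics.pvariance(estimates)
    mse = statistics.fmean([(e - mu) ** 2 for e in estimates])
    return bias, variance, mse

for c in (1.0, 0.8, 0.5):
    bias, variance, mse = simulate_shrinkage(c)
    print(f"c={c:.1f}  bias^2={bias**2:.3f}  variance={variance:.3f}  mse={mse:.3f}")
```

With these assumed values, shrinking the estimate (`c` below 1) adds bias but cuts variance, and a moderate amount of shrinkage can lower the total error. Being able to explain why is the kind of answer the paragraph above is asking for.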

2. Statistics Is a Trap — Because Interviewers Go Two Layers Deep

Here's the deceptively correct answer every candidate gives: a p-value ≤ 0.05 indicates strong evidence against the null hypothesis. Textbook accurate. And in 2026, completely insufficient at a top-tier company. Strictly speaking, a p-value is the probability of seeing data at least this extreme if the null hypothesis were true, not the probability that the null hypothesis is true. The follow-up questions are getting sharper, and they're designed to surface whether you understand the concept or just the definition.

"What's the difference between statistical significance and practical significance?"

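A quick way to rehearse an answer to that follow-up is to compute both quantities side by side. The sketch below assumes a hypothetical A/B test (average session length of 300.0 vs 300.5 seconds, a shared known standard deviation of 60, one million users per group) and a hypothetical helper, `two_sample_z`. It uses a two-sided z-test and Cohen's d as the effect size, where a d below about 0.2 is conventionally read as small. None of these numbers come from the roadmap.

```python
import math

def two_sample_z(mean_a, mean_b, sd, n_per_group):
    """Two-sided z-test for a difference in means, assuming a known, shared sd."""
    diff = mean_b - mean_a
    std_err = sd * math.sqrt(2.0 / n_per_group)
    z = diff / std_err
    # Two-sided p-value under the standard normal: erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    # Cohen's d: the difference expressed in standard deviations.
    cohens_d = diff / sd
    return z, p_value, cohens_d

z, p_value, cohens_d = two_sample_z(300.0, 300.5, sd=60.0, n_per_group=1_000_000)
print(f"z={z:.2f}  p={p_value:.2e}  Cohen's d={cohens_d:.4f}")

# Statistically significant at the 0.05 level, yet the effect is under
# one hundredth of a standard deviation: significance is not importance.
print("statistically significant:", p_value < 0.05)
print("practically small effect:", abs(cohens_d) < 0.2)
```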
💡 Expert Tip — The Two-Layer Rule

3. The Portfolio Trinity Is Harder to Execute Than It Looks

The roadmap introduces a portfolio framework: Fun × Relevant × Explainable. Most candidates read this and think their current portfolio qualifies. It almost certainly doesn't, because satisfying one dimension at the expense of the other two is the most common portfolio failure mode.

Interview Signal

GitHub combined with a personal blog documenting the full workflow — from raw data to business narrative — is what 'Show, Don't Just Tell' actually means. The blog post is not optional decoration. It's where you prove you can communicate findings to a non-technical stakeholder.

4. The Four Interview Rounds Each Have a Hidden Scoring Dimension

From phone screen to offer, each stage of the data science interview cycle tests a stated competency and a hidden one. Candidates who only prepare for the surface layer quietly lose points on the dimension the interviewer is actually scoring.

| Stage | Surface Assessment | Hidden Assessment |
|---|---|---|
| Phone Screen | Background introduction | Clarity of self-positioning. Can you explain your journey and your target role in 90 seconds without wandering? |
| Virtual Technical | SQL / ML algorithm questions | Thinking visibility under pressure. Are you narrating your reasoning, or silently computing? Interviewers score the process, not just the answer. |
| On-site Deep Dive | Whiteboard coding / case study | Stakeholder communication ability. Can you explain a modeling decision to a non-technical audience without losing precision? |
| HR Round | Culture fit conversation | Consistency of career narrative. Does your story hold together across everything you've said? Inconsistencies surface here. |

🎯 Interview Tactic — Prepare for the Hidden Layer Explicitly

The Gap Between Entry-Level and Experienced Isn't Years — It's the Quality of Your Mental Framework

Data science is projected to be one of the fastest-growing careers of the decade, with a 4× salary gap between entry-level and experienced professionals. That gap isn't primarily about years of experience. It's about the systematic quality of how you think about problems, communicate under pressure, and build things that work in the real world.

At HéraAI, we believe career competitiveness in the AI era starts with honest self-assessment and a structured preparation path — not just more flashcards.

This article is part of the Career Intelligence Series from HéraAI — Instant Access to 5.8M+ Active Jobs Worldwide.