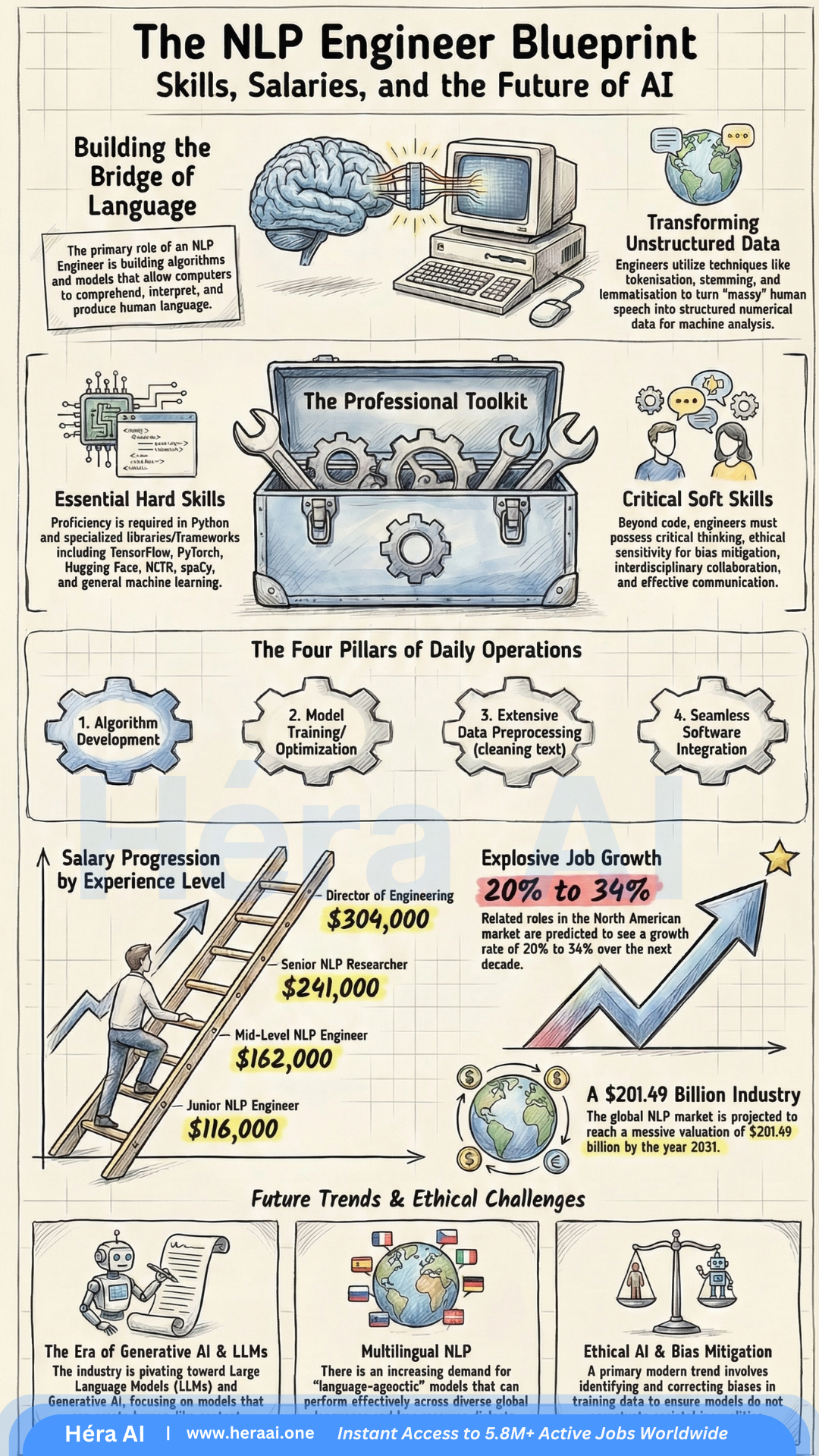

The global NLP market is projected to surpass $201 billion by 2031. The opportunity isn't scarce — the talent to seize it is.

The AI boom has created a landscape defined by equal parts immense opportunity and real confusion. While 'AI' has become a corporate buzzword, the most lucrative and stable career opportunities are concentrating within a specific discipline: Natural Language Processing.

NLP is the engine behind the Generative AI revolution — the technology allowing machines to grasp, interpret, and generate human language. And right now, the market is desperately short on people who can build it well. Surviving the 2026 hiring cycle requires more than a certificate. It requires a strategic understanding of how the technical and economic pieces actually fit together. Here are five truths that will change how you approach this market.

1. The 'Junior' Label Is a Misnomer — And the Salary Floor Reflects That

One of the most persistent myths in tech hiring is that 'Junior' roles in AI are low-paying entry points. In the NLP world, that label describes what is, in practice, a high-impact engineering role with a salary floor most industries reserve for senior staff.

The reason the floor is this high comes down to one thing: human ambiguity. Even a baseline NLP role requires the ability to teach machines to parse the messiness of human intent — sarcasm, context shifts, cultural inference, contradictory phrasing. That's not a skill that scales easily.

What this means for you: That salary floor isn't just attractive compensation data; it reflects genuine talent scarcity. Companies pay at this level because they can't find enough people who can bridge computational linguistics and deep learning. That gap is your leverage.

2. Linguistic Intuition Is the Differentiator That Separates Good Candidates from Hired Ones

Thousands of candidates can list PyTorch and TensorFlow on their resumes. Far fewer can explain why a model fails to handle sarcasm, or what it would take to fix it.

That distinction is linguistic intuition: the ability to reason about the gap between what a model processes and what a human actually means. It's not a soft skill. It's a technical design capability. The most effective NLP engineers understand the structural difference between syntax (how language is arranged) and semantics (what it actually means). That understanding directly shapes model architecture decisions — which training signals to weight, which evaluation metrics to trust, and where the model is likely to fail silently.
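To make the "fails silently" point concrete, here is a minimal sketch of a word-counting sentiment scorer. The word lists and the `lexicon_score` helper are hypothetical and chosen only for illustration. Because the scorer looks at which words appear and ignores what the speaker means by them, it gives a literal compliment and a sarcastic complaint the same score.

```python
# Toy lexicon-based sentiment scorer. The word lists are hypothetical
# and far smaller than anything used in practice.
POSITIVE = {"great", "love", "wonderful", "perfect"}
NEGATIVE = {"terrible", "hate", "awful", "broken"}


def lexicon_score(text: str) -> int:
    """Count positive words minus negative words, ignoring order and context."""
    tokens = [t.strip(".,!?").lower() for t in text.split()]
    positives = sum(t in POSITIVE for t in tokens)
    negatives = sum(t in NEGATIVE for t in tokens)
    return positives - negatives


literal = "I love this phone, the camera is great."
sarcastic = "Oh great, another update that deleted my photos. I love that."

# Both sentences contain "great" and "love", so the scorer cannot tell them apart.
print(lexicon_score(literal))
print(lexicon_score(sarcastic))
```

The linguistic explanation is that sarcasm inverts the meaning of the surface words, so any signal built only on word identity will report confidence where it should report doubt.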

What Linguistic Intuition Looks Like in Practice

Interview signal: When asked about model limitations, don't just describe the technical failure. Explain the linguistic phenomenon behind it. That's the answer that gets offers.

3. Bias Mitigation Is Now a Hard Engineering Skill — Not an Ethics Elective

The industry has moved from 'pure tech' to 'responsible tech.' In the 2026 hiring landscape, if you can't speak concretely to bias mitigation, you're a liability — particularly for roles in hiring, law enforcement, healthcare, and customer service, where biased model outputs carry legal and operational consequences.

The framing shift that matters: stop treating debiasing as an ethical consideration and start treating it as a technical requirement embedded in your engineering pipeline.

Bias Mitigation: The Technical Toolkit

The hiring reality: Senior hiring managers increasingly treat bias mitigation fluency as a minimum bar, not a bonus. Candidates who can walk through a concrete debiasing pipeline — with specific tools and metrics — stand out immediately.
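As one example of what a concrete metric can look like, the sketch below computes a demographic parity gap: the difference in positive-prediction rates between groups. The function names, the group labels, and the prediction data are all hypothetical, and this is only one of several fairness metrics a real pipeline might track.

```python
from collections import defaultdict


def positive_rate_by_group(predictions, groups):
    """Return the share of positive (1) predictions for each group label."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions, groups):
    """Largest difference in positive-prediction rate between any two groups."""
    rates = positive_rate_by_group(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical screening-model outputs: 1 = advance the candidate, 0 = reject.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = positive_rate_by_group(preds, groups)
gap = demographic_parity_gap(preds, groups)
print(rates, gap)
```

A gap of zero means every group receives positive predictions at the same rate. What counts as an acceptable gap is a policy decision that depends on the application, not something the code can settle.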

4. Transformer Architecture Is the Baseline — Know It Beyond the Buzzwords

The 2026 interview room has moved past recurrent neural networks. The baseline expectation is a genuine understanding of the Transformer architecture: not just that it works, but why it outperforms earlier approaches and what its actual limitations are.

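The key insight: Transformers largely addressed the long-range dependency problem that made RNNs unreliable for complex language tasks. An RNN passes information through a chain of hidden states, so context from early tokens fades as the sequence grows. Self-attention instead lets every token attend directly to every other token in the sequence, regardless of the distance between them. The limitation worth knowing: the cost of that all-pairs comparison grows quadratically with sequence length.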
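The mechanism can be sketched in a few lines. The example below is a minimal, pure-Python version of scaled dot-product self-attention. The toy embeddings are hypothetical, and the queries, keys, and values are set equal for simplicity, whereas a real Transformer layer first applies learned projection matrices and uses multiple heads.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def self_attention(queries, keys, values):
    """Scaled dot-product attention: each query is compared with every key."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Each output is the attention-weighted average of the value vectors.
        out = [
            sum(w * v[i] for w, v in zip(weights, values))
            for i in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical 2-dimensional embeddings for a 3-token sequence.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(x, x, x)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how much one token draws on every other token. The first token gives the third token the same weight as itself even though they are two positions apart, which is the sense in which distance does not degrade the signal.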

Core Transformer Concepts for 2026 Interviews

💡 Expert Tip — The Lemmatization vs. Stemming Question

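The question of when to use lemmatization versus stemming appears in production-level interviews to test whether you understand the trade-off between linguistic accuracy and computational efficiency. Stemming strips suffixes by rule, which is fast but can produce non-words; lemmatization maps each word to its dictionary form, which is more accurate but needs a lexicon and usually part-of-speech information.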
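The trade-off is easier to see side by side. The sketch below uses a deliberately naive suffix stripper and a tiny lookup table. Both are hypothetical stand-ins: `naive_stem` is not the Porter algorithm, and `toy_lemmatize` covers only the four words in its dictionary, whereas a real lemmatizer relies on a full lexicon.

```python
# Toy rule list, checked in order. This is not the Porter algorithm.
SUFFIXES = ("ing", "ies", "ed", "es", "s")


def naive_stem(word: str) -> str:
    """Strip the first matching suffix; fast, but blind to meaning."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


# Hypothetical lemma dictionary covering only these examples.
LEMMAS = {"studies": "study", "running": "run", "better": "good", "was": "be"}


def toy_lemmatize(word: str) -> str:
    """Look up the dictionary form; accurate only for words the lexicon covers."""
    return LEMMAS.get(word, word)


for w in ["studies", "running", "better", "was"]:
    print(f"{w:10} stem={naive_stem(w):10} lemma={toy_lemmatize(w)}")
```

The stemmer turns "studies" into the non-word "stud" and leaves "better" untouched, while the lemmatizer recovers "study" and "good" at the cost of maintaining a lexicon. Which one fits depends on whether the downstream task needs real words or only consistent buckets.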

5. Portfolios Are the New Resume — Build Projects That Prove Production Readiness

Theoretical knowledge is a commodity. In 2026, what separates shortlisted candidates from the rest is a demonstrated ability to build systems that handle real-world messiness, rather than only clean benchmark datasets.

The rise of Shadow AI (employees using AI tools outside official IT channels) and AI Democratization means hiring managers are increasingly evaluating whether you can build the tools others are already using. Your portfolio is where you prove that.

🎯 Interview Tactic — The Out-of-Vocabulary (OOV) Problem

A major red flag for hiring managers is a candidate who can't articulate a strategy for handling words the model has never seen. The answer interviewers are looking for:
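One widely used strategy is subword tokenization: break an unseen word into smaller pieces the model does know. The sketch below is a toy greedy longest-match segmenter in the spirit of WordPiece, offered as an illustration rather than a definitive answer. The vocabulary and the `segment` helper are hypothetical, and real tokenizers learn their vocabularies from data.

```python
# Hypothetical subword vocabulary; "##" marks a piece that continues a word.
VOCAB = {"token", "##iz", "##ize", "##able", "##s"}
UNKNOWN = "[UNK]"


def segment(word: str, vocab=VOCAB) -> list:
    """Greedy longest-match-first segmentation of a single word."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, then shrink it.
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate
            if candidate in vocab:
                match = candidate
                break
            end -= 1
        if match is None:
            return [UNKNOWN]  # no known piece fits, so fall back to the unknown token
        pieces.append(match)
        start = end
    return pieces


print(segment("tokenizable"))  # a word assumed absent from the vocabulary as a whole
print(segment("tokens"))
print(segment("xyz"))
```

With this toy vocabulary, "tokenizable" is never seen as a whole word but still decomposes into known pieces, while "xyz" falls back to the unknown token. Character-level or byte-level fallbacks are another option, with longer sequences as the cost.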

The portfolio principle: Every project you include should answer one question for the hiring manager: 'Can this person handle the failures that don't appear in documentation?' Show the edge cases you solved, not just the pipelines you built.

The Future Belongs to Engineers Who Ensure AI Truly Understands Language

As AI Democratization accelerates, the number of people who can use the tools will grow rapidly. The number who can ensure those tools are accurate, ethical, and genuinely language-aware will remain scarce.

The engineers who define this next phase won't just generate text. They'll be part linguist, part engineer, part strategist — capable of navigating the boundary between what a model outputs and what a user actually needs. The field is moving toward multilingual embeddings, complex discourse analysis, and real-time adaptive systems. The candidates who thrive will be the ones who understand not just how these systems work, but why they sometimes don't — and what it takes to fix them.

At HéraAI, that's the level of strategic clarity we help engineers develop. The NLP market in 2026 rewards engineers who combine technical depth with linguistic intuition — and who can prove it through shipped projects, not just credentials.

This article is part of the Career Pivot Navigator series from HéraAI — Instant Access to 5.8M+ Active Jobs Worldwide.