General software hiring is cautious. AI engineer demand is at fever pitch. The gap between those two sentences is the largest career opportunity in the current market — and it is accessible without a PhD.

The current conversation about AI is dominated by displacement anxiety. The data tells a different story. What is happening in the labour market is not job erasure — it is job evolution, and the demand curve for engineers who can implement AI in production has effectively decoupled from the rest of the software hiring market. General tech hiring remains cautious. AI engineer hiring is accelerating.

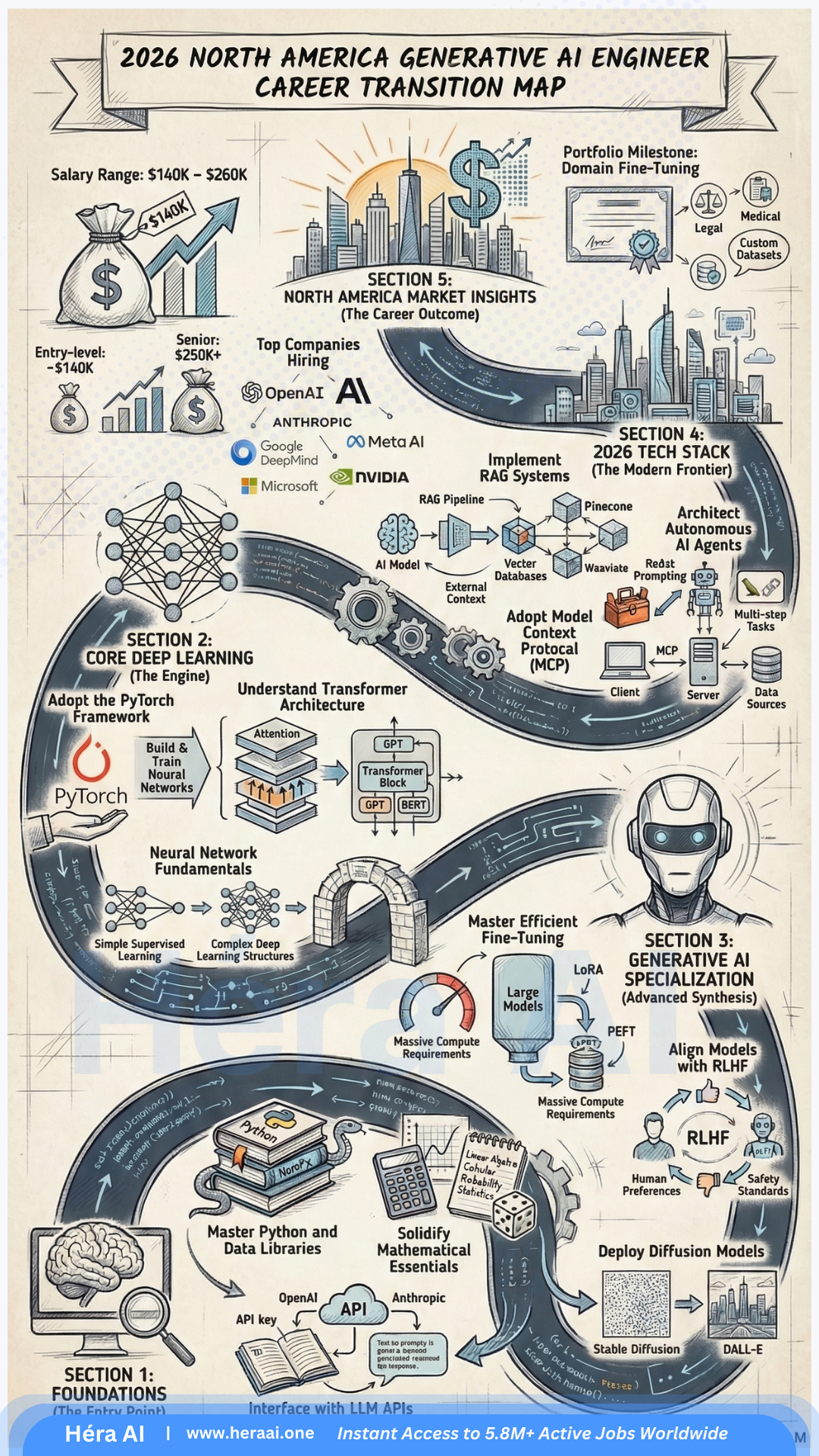

The $200,000 average salary for Generative AI Engineers in 2025 reflects a genuine scarcity of talent that can move beyond API wrappers into production-grade AI systems — engineers who understand RAG architecture, context engineering, orchestration frameworks, and AI safety. The market is pricing that combination at a premium because it is structurally rare, and because the demand for it is arriving from every industry simultaneously.

AI Researcher vs. AI Engineer: The Distinction That Opens the Market

The most persistent barrier to entering AI engineering is a misconception: that the field requires the ability to invent new neural architectures. It does not. A fundamental distinction has emerged in the 2025 market between the AI Researcher — who trains models from scratch — and the AI Engineer, who builds production applications using pre-trained models and existing AI tooling. These are different professions with different entry requirements.

| Dimension | AI / ML Researcher | AI Engineer ✓ The Pivot Path |

|---|---|---|

| Primary output | Novel model architectures, academic papers, SOTA benchmark improvements | Production applications that deliver measurable business value using existing models |

| Relationship to models | Designs and trains models from scratch; requires deep mathematical foundations | Selects, integrates, and optimises pre-trained models; focuses on application architecture |

| Typical background | PhD or research Master's in ML, mathematics, or computational statistics | Strong engineering fundamentals; Python proficiency; API and system integration experience |

| Market demand (2025) | Concentrated at frontier labs (OpenAI, Anthropic, Google DeepMind, Meta AI) | Broad demand across every industry sector integrating AI into products and workflows |

| Compensation ceiling | $350K–$500K+ at frontier labs; highly concentrated and competitive supply | $140K–$260K average; $200K median; rapidly expanding demand across non-frontier employers |

| Accessible via pivot? | No — requires multi-year research training from foundational principles | Yes — engineers with strong backend, QA, or BI foundations can pivot within 6–18 months |

The reframe that changes the calculus for every mid-career engineer: You are not tasked with reinventing the wheel. You are building the vehicle that uses the wheel to deliver enterprise value. The AI Engineer's job is implementation: selecting the right model for the task, building the architecture that feeds it the right data, ensuring it operates safely at production scale, and connecting its outputs to the business workflow that creates value. This is an engineering problem, not a research problem — and it is accessible to anyone with strong engineering fundamentals.

The Trojan Horse Strategy: Your Existing Skills Are the Entry Ticket

Strategic career switchers understand that they are not starting from zero. The IT skills that define competency in traditional engineering roles — Python, API integration, SQL, automation thinking, system architecture — are the same skills that underpin AI engineering at the implementation layer. The pivot is not a restart; it is a horizontal extension into a new application domain.

The guiding principle for 2025 career pivot strategy: If you can debug a script, you can debug a model. The cognitive pattern of isolating a failure, forming a hypothesis about its cause, testing against edge cases, and iterating toward a fix is identical in both contexts. The domain knowledge is different. The engineering discipline is the same. The market is currently paying a $60K–$100K premium over general software engineering salaries to engineers who have made this connection and built the AI-specific skills on top of their existing foundation.

Wrappers vs. Real Applications: The Context Engineering Stack

The compensation gap between a $100K entry-level AI role and a $200K–$260K senior AI engineering role maps almost exactly onto one technical distinction: the ability to build a context engineering stack rather than an API wrapper. The market is oversupplied with engineers who can call an LLM API. It is structurally undersupplied with engineers who can build the retrieval, orchestration, and safety layers that make an AI application enterprise-deployable.

API Wrapper (Entry Level)

- ✗ Direct OpenAI / Anthropic API calls with no intermediate layer

- ✗ Static system prompts with no dynamic context injection

- ✗ No retrieval layer — model relies on training data alone

- ✗ No memory management — context window fills and truncates

- ✗ No safety layer — raw model output sent directly to users

Context Engineering Stack (Elite Tier)

- ✓ RAG architecture: vector database retrieval injects private enterprise data into model context

- ✓ Orchestration layer (LangChain / LlamaIndex) manages multi-step reasoning and tool calls

- ✓ Context compaction: summarisation strategies prevent context window overflow

- ✓ Context isolation: role-based access control determines which data each user's context receives

- ✓ Safety layer: prompt injection detection, output filtering, adversarial testing protocols

AI Safety: The Competitive Moat That Justifies the Top-Tier Premium

As enterprises move from AI pilots to AI production systems, the risk calculus changes. A chatbot prototype that occasionally produces incorrect output is an inconvenience. An enterprise AI system that handles HR records, financial data, or customer PII and occasionally produces incorrect or leaked output is a liability event. The engineers who can build safety into the architecture — not as an afterthought but as a foundational design constraint — are the ones who unlock the enterprise contracts that pay at the top of the market range.

Prompt Injection Attacks

Bias and Fairness Auditing

Adversarial Testing

Context Isolation

Output Monitoring

The principle that defines the AI engineering opportunity in 2025: The barrier to entry is lowering for engineers with strong logical foundations. The rewards are increasing for engineers who can direct the machine's intent — who understand not just how to call an API, but how to build the context, safety, and orchestration architecture that makes an AI system trustworthy at enterprise scale. In a world where AI can write the code, the engineers who decide what the code should do, what data it should see, and what it must never do are the ones the market is paying $200K for. That is the pivot.

This article is part of the Career Pivot Navigator series from HéraAI — Instant Access to 5.8M+ Active Jobs Worldwide.